LangGraph output streaming

I checked the posts below, but combining the two is kinda hard and it's not working for me... please help

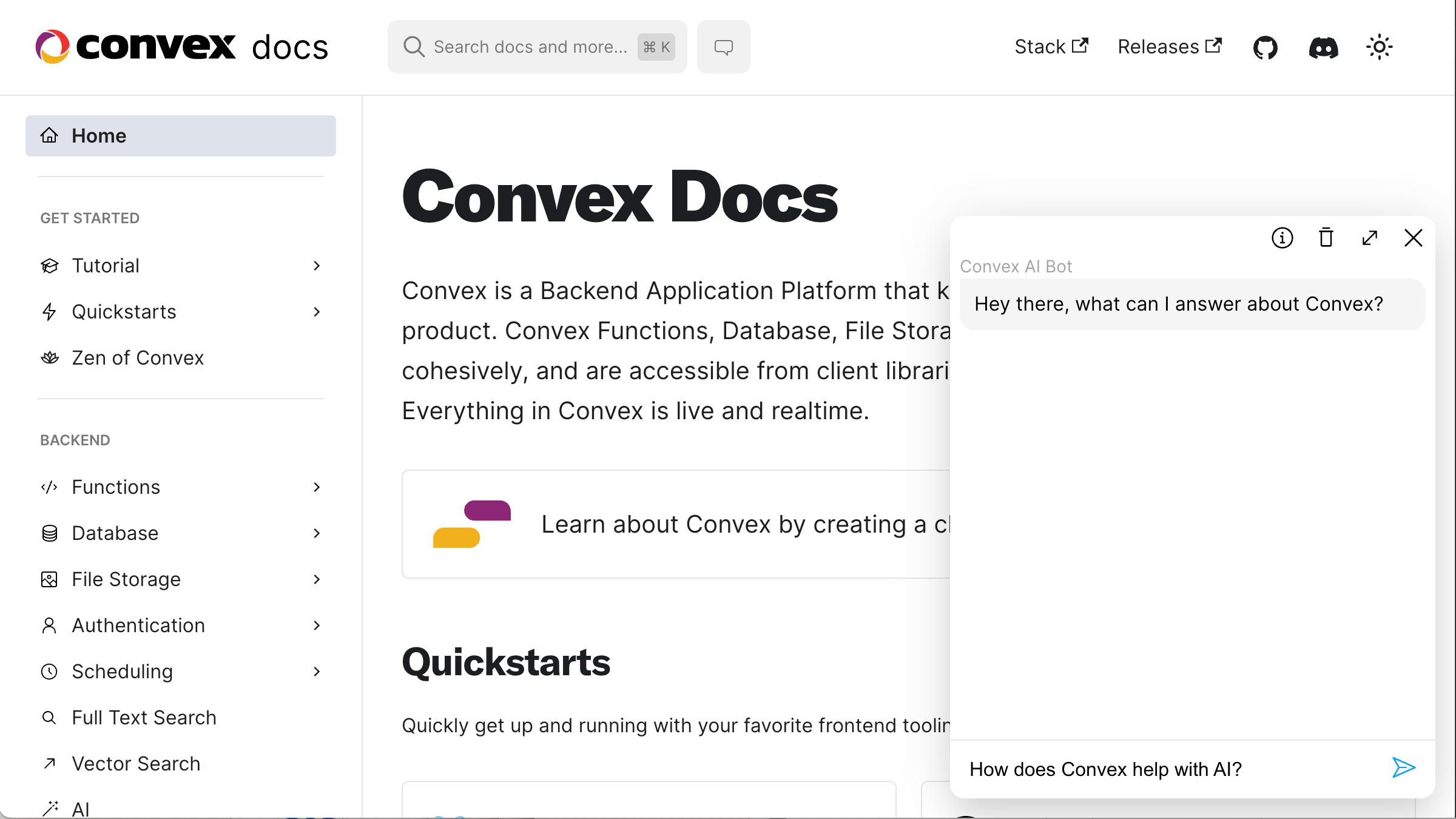

https://stack.convex.dev/ai-chat-using-openai-assistants-api

https://stack.convex.dev/streaming-vs-syncing-why-your-chat-app-is-burning-bandwidth